Amazon Security Lake Integration

This article provides guidance for customers who wish to integrate Vectra Detect for AWS with Amazon Security Lake.

Introduction

Detect for AWS offers advanced Threat Detection & Response coverage for your AWS control plane. We leverage AI & ML techniques to monitor all activity in your organization’s global AWS footprint for malicious behaviors. Detect for AWS leverages CloudTrail logs and contextual IAM information to find malicious activity, and then attribute it to the malicious actor itself using Vectra’s Kingpin technology.

Amazon Security Lake is a data lake for security logs, built in the customer’s AWS account. The data lake is backed by an S3 bucket and organizes data as a set of Lake Formation tables. Core to the Amazon Security Lake mission is simplifying the storage, retrieval, and consumption of security logs through application of a common schema. The Open Cybersecurity Schema Framework (OCSF) is a collaborative open-source effort between AWS and partners.

Vectra supports integration with Amazon Security Lake and the OCSF. The integration feeds new Vectra account scoring events to the S3 bucket of an Amazon Security Lake custom source. Customers using Amazon Security Lake gain a high-quality source of behavior detections from Detect for AWS, alongside any other security events they ingest into Amazon Security Lake.

Requirements

An active trial or existing licensing for Detect for AWS

A configured data source for Detect for AWS

A Vectra SaaS API client with minimum permissions of:

View – Accounts

Edit – Notes

Access to Amazon Security Lake

An Amazon Security Lake Custom Source

Joining Vectra Detect for AWS

Existing customers of Vectra Detect for AWS with a valid license are supported. If you do not already have a license for Detect for AWS, a trial license is also supported. To begin a free trial of Vectra Detect for AWS please follow the link. The Vectra Detect for AWS Deployment Guide on the Vectra Support portal will walk you through deployment.

Creating a Vectra Detect for AWS Data Source

Full details, including troubleshooting steps, are available in the Vectra Detect for AWS Deployment Guide; a short summary of the steps follows. Vectra enables automated deployment via a CloudFormation template, and the guide also documents the manual steps if you prefer them:

Creating a Detect for AWS connection in the Vectra UI.

Creating an SNS topic (or using an existing one) to notify Vectra when new logs are available to retrieve.

Creating a role in your AWS account to allow Vectra to retrieve logs from the S3 bucket where your CloudTrail logs are located.

Providing Vectra with the AWS role ARN, S3 bucket location, and SNS topic ARN.

After you submit these details, Vectra will subscribe the SNS topic to your S3 bucket.

Creating Vectra SaaS API Client

To create a Vectra SaaS API client:

Log in to your instance and navigate to Manage > API Clients.

Click “Add API Client”.

Give your client a name and a role that allows for at least “View – Accounts” and “Edit – Notes” permissions.

Roles can be seen in Manage > Roles.

The following built-in roles contain the necessary permissions:

Admin, Restricted Admin, Security Analyst, Setting Admin, and Super Admin.
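
To illustrate how a client ID and secret of this kind are typically used, the sketch below builds (but does not send) an OAuth2 client-credentials token request. The `/oauth2/token` path, the helper name `build_token_request`, and the example values are assumptions made for illustration; confirm the actual endpoint and flow in the Vectra SaaS API documentation before relying on it.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(base_url: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Build an OAuth2 client-credentials token request without sending it.

    ASSUMPTION: the "/oauth2/token" path is illustrative, not taken from this article.
    """
    # HTTP Basic credentials are base64("client_id:client_secret").
    raw = f"{client_id}:{client_secret}".encode("utf-8")
    basic = base64.b64encode(raw).decode("ascii")
    body = urllib.parse.urlencode({"grant_type": "client_credentials"}).encode("ascii")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/oauth2/token",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would send the request; keeping construction separate makes it easy to inspect the headers without exposing the secret to the network during testing.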

Additional documentation about the SaaS API can be found in the following articles:

Creating an Amazon Security Lake Custom Source

Before ingesting into a custom source, that source must be registered with Amazon Security Lake using the console or API method. Amazon Security Lake will assign a unique prefix within the Amazon Security Lake S3 bucket in the customer’s account, which will prevent collision with other sources.

Please follow the Collecting data from custom sources documentation from AWS for creation of the Amazon Security Lake Custom Source for use with Vectra’s Detect for AWS data. Vectra’s CloudFormation template will ask for the S3 bucket and unique prefix of the Amazon Security Lake Custom Source you have created before the template can be run. Please keep in mind the following when creating the custom source:

In the “OCSF Event class” dropdown, select “Security Finding”.

For the “Account ID”, please enter a delegated administrator Account ID that has rights to write logs and events to the data lake.

Running the Amazon Security Lake Integration Deployment Script

Vectra’s CloudFormation script will create several resources:

An execution role based on the service-role "AWSLambdaBasicExecutionRole".

An event trigger that invokes the Lambda function every 5 minutes.

Permissions that allow the scheduled trigger to invoke the Lambda function.

A Lambda layer containing the AWS Wrangler library for Python 3.9.

A DynamoDB Table to keep the state of the lambda executions.

A Lambda execution role with appropriate permissions on S3 and DynamoDB.

The Lambda function itself, which calls the Vectra SaaS API to perform the account enrichment.
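
The scheduled trigger and the state table listed above suggest a checkpointed polling pattern: each run reads the last processed position, fetches anything newer, and saves the new position. The following is a minimal sketch of that pattern only, not Vectra's Lambda code. The `fetch_events` callable, the `checkpoint` key, and the in-memory dict standing in for the DynamoDB table are all hypothetical.

```python
from typing import Callable, Dict, List


def run_once(
    state: Dict[str, int],
    fetch_events: Callable[[int], List[dict]],
) -> List[dict]:
    """Process one scheduled invocation and advance the checkpoint.

    `state` stands in for the DynamoDB table. `fetch_events(after_id)` is a
    hypothetical callable returning events whose integer "id" exceeds after_id.
    """
    last_id = state.get("checkpoint", 0)
    events = fetch_events(last_id)
    if events:
        # Persist the highest ID seen so the next run skips these events.
        state["checkpoint"] = max(e["id"] for e in events)
    return events


# Fake data in place of the Vectra SaaS API.
_FAKE_EVENTS = [{"id": 1}, {"id": 2}, {"id": 3}]


def fake_fetch(after_id: int) -> List[dict]:
    return [e for e in _FAKE_EVENTS if e["id"] > after_id]


state: Dict[str, int] = {}
first = run_once(state, fake_fetch)   # picks up all three fake events
second = run_once(state, fake_fetch)  # nothing new, so the list is empty
```

Storing the checkpoint outside the function is what lets a stateless, periodically invoked function avoid writing the same event twice.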

The following link will take you to CloudFormation and open a Quick create stack page where you can fill in the details. If you are not already logged in to AWS, you will need to log in. The template defaults to the us-west-2 region and must be run from that region.

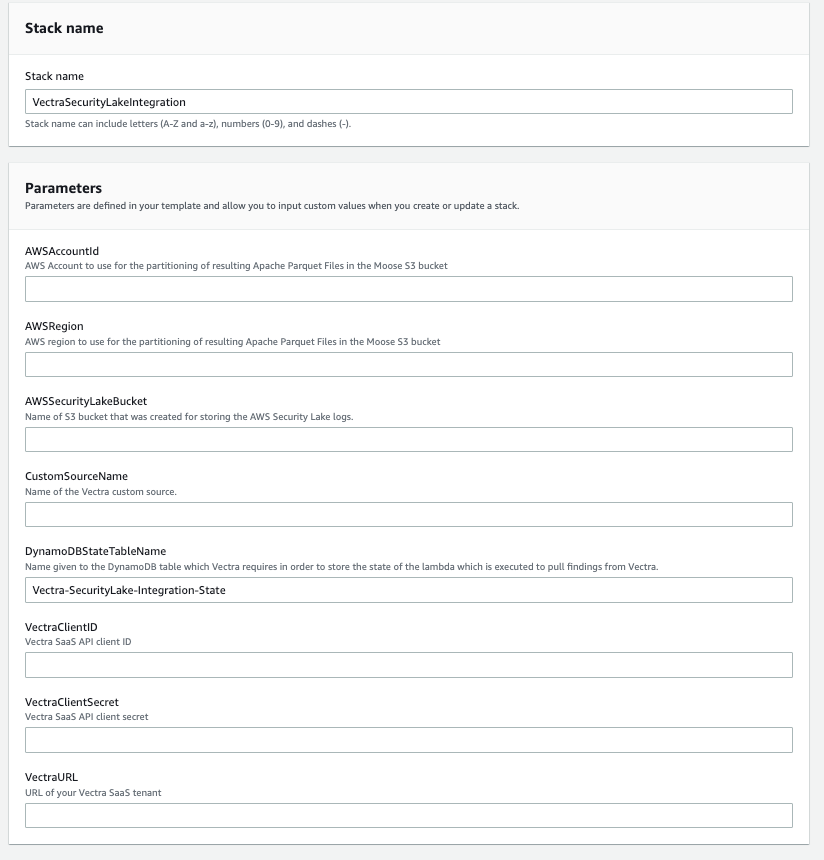

On the next page you will see an example of the stack configuration page you will need to complete. Keep in mind:

The stack name is pre-set but can be changed if you desire.

The Vectra URL, ClientID, and ClientSecret refer to your Vectra SaaS instance that you will retrieve the data from.

The Vectra URL should be formatted as follows: https://123123123123.uw2.portal.vectra.ai

The ClientID and ClientSecret should be pasted exactly as you copied them from the Creating Vectra SaaS API Client step previously.

The AWSAccountID and AWSRegion are used to partition data in your Amazon Security Lake instance. Think of them as directory names.

The AWSAccountID must be 12 digits.

The AWSRegion should be formatted as follows: us-east-1 (use your appropriate region)

The AmazonSecurityLakeS3Bucket and AmazonSecurityLakeS3BucketPrefix should match what you created for the custom data source in Amazon Security Lake for Vectra.
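
Before submitting the form, it can help to check the values against the formatting rules above. The sketch below is a hypothetical local pre-check, not part of Vectra's template. The `VectraURL` key name is an assumption (the other keys follow the parameter names above), the region check only tests the general shape, and the Hive-style partition layout in `partition_prefix` is an assumption that should be confirmed against the AWS custom source documentation.

```python
import re
from urllib.parse import urlparse


def validate_stack_parameters(params: dict) -> list:
    """Return a list of human-readable problems; an empty list means none were found."""
    problems = []

    url = urlparse(params.get("VectraURL", ""))
    if url.scheme != "https" or not url.netloc:
        problems.append("VectraURL must be an https:// URL")

    if not re.fullmatch(r"\d{12}", params.get("AWSAccountID", "")):
        problems.append("AWSAccountID must be exactly 12 digits")

    # Loose shape check for names such as us-east-1; it does not verify the region exists.
    if not re.fullmatch(r"[a-z]{2}(-[a-z]+)+-\d", params.get("AWSRegion", "")):
        problems.append("AWSRegion must look like us-east-1")

    for key in ("ClientID", "ClientSecret", "AmazonSecurityLakeS3Bucket"):
        if not params.get(key, "").strip():
            problems.append(f"{key} must not be empty")

    return problems


def partition_prefix(source_prefix: str, region: str, account_id: str, event_day: str) -> str:
    """Show how region and account ID can act like directory names.

    ASSUMPTION: the region=/accountId=/eventDay= layout is illustrative only.
    """
    return (
        f"{source_prefix.strip('/')}/region={region}"
        f"/accountId={account_id}/eventDay={event_day}/"
    )
```

For example, `partition_prefix("ext/vectra", "us-east-1", "123456789012", "20240101")` yields a key prefix in which the region and account ID appear as nested path segments.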

When done filling out the form, create the stack to begin ingesting Vectra Detect for AWS data into Amazon Security Lake.

OCSF Field Mapping Table

| Field | Details |
| --- | --- |
| activity | Set statically to "Generate", as this always corresponds to a new finding |
| activity_id | Set statically to "1", the ID corresponding to "Generate" |
| category_name | Set statically to "Findings", as we are generating a security finding |
| category_uid | Set statically to 2, corresponding to "Findings" |
| class_name | Set statically to "Security Findings", as we are generating a security finding |
| class_uid | Set statically to 2001, corresponding to the Security Finding class |
| finding.title | Set statically to "Generate" |
| finding.src_url | Points to the URL of the impacted account on the Vectra SaaS UI |
| finding.uid | Points to the account ID of the impacted account on the Vectra SaaS UI |
| finding.supporting_data.assignment | User to whom the account has been assigned for investigation (if any) |
| finding.supporting_data.last_detection_href | URL pointing to the last detection on this account on the Vectra SaaS UI |
| finding.supporting_data.last_detection_type | Type/model of the last detection alerted on this account |
| finding.supporting_data.last_detection_id | ID of the last detection alerted on this account |
| finding.supporting_data.active_detection_types | Array of all active detections on this account |
| message | Type of alert, typically "Account Host Score Change" |
| metadata.version | Set statically to "1.0.0" in the first iteration of this integration |
| metadata.product.lang | Set statically to "en", as Vectra only exists in English |
| metadata.product.uid | Unique identifier of the Vectra SaaS UI |
| metadata.product.vendor_name | Set statically to "Vectra AI" |
| metadata.product.name | Set statically to "Vectra Detect" |
| time | The original timestamp of the event from the Vectra brain |
| severity | Severity of the account, as in the Vectra quadrant, between "Low" and "Critical" |
| severity_id | Severity score of the account, based on the Vectra account score, between 2 and 5 |
| status_detail | Whether this is a score increase or decrease |
| state_id | Set statically to 1, as alerts will always be about new events |
| type_uid | Set statically to "200101", corresponding to "Security Finding: Generate" |
| type_name | Set statically to "Security Finding: Generate" |
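
To make the mapping concrete, here is a minimal sketch of a function that assembles a record with the fields listed above. It is not Vectra's implementation. The input keys (`account_url`, `account_id`, `last_detection`, `product_uid`, `score_change`, and so on) are hypothetical, the sample values are made up, and emitting the numeric IDs as integers is an assumption. The severity mapping follows the OCSF convention of 2 for Low through 5 for Critical.

```python
import json

# Severity names mapped to the 2 to 5 range described in the table above.
SEVERITY_IDS = {"Low": 2, "Medium": 3, "High": 4, "Critical": 5}


def to_ocsf_finding(event: dict) -> dict:
    """Map a hypothetical Vectra account scoring event to the listed OCSF fields."""
    severity = event["severity"]
    last = event.get("last_detection", {})
    return {
        # Static values taken from the field mapping table.
        "activity": "Generate",
        "activity_id": 1,
        "category_name": "Findings",
        "category_uid": 2,
        "class_name": "Security Findings",
        "class_uid": 2001,
        "finding": {
            "title": "Generate",
            "src_url": event["account_url"],
            "uid": str(event["account_id"]),
            "supporting_data": {
                "assignment": event.get("assignment"),
                "last_detection_href": last.get("href"),
                "last_detection_type": last.get("type"),
                "last_detection_id": last.get("id"),
                "active_detection_types": event.get("active_detection_types", []),
            },
        },
        "message": event["message"],
        "metadata": {
            "version": "1.0.0",
            "product": {
                "lang": "en",
                "uid": event["product_uid"],
                "vendor_name": "Vectra AI",
                "name": "Vectra Detect",
            },
        },
        "time": event["time"],
        "severity": severity,
        "severity_id": SEVERITY_IDS[severity],
        "status_detail": event["score_change"],
        "state_id": 1,
        "type_uid": 200101,
        "type_name": "Security Finding: Generate",
    }


# Made-up sample input; none of these values come from a real tenant.
sample = {
    "account_url": "https://123123123123.uw2.portal.vectra.ai/accounts/42",
    "account_id": 42,
    "message": "Account Host Score Change",
    "product_uid": "example-product-uid",
    "time": 1700000000,
    "severity": "High",
    "score_change": "increase",
}
record = to_ocsf_finding(sample)
serialized = json.dumps(record)  # the record contains only JSON-serializable values
```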

Worldwide Support Contact Information

Support portal: https://support.vectra.ai

Email: [email protected] (preferred contact method)

Additional information: https://www.vectra.ai/support
